Thousands of Zero-Days Are About to Go Public. Is Your WAF Ready?

CVSS scores assume exploiting vulnerabilities requires human expertise. Anthropic's Mythos just produced thousands of working exploits for $2K each. Every "medium" in your backlog is now effectively a "high." Here's the data and what to do about it.

Every risk score in your vulnerability scanner is wrong.

Not slightly off. Fundamentally wrong. CVSS scores are built on one core assumption: exploiting vulnerabilities requires expensive human expertise. That assumption died this month.

Anthropic's Claude Mythos Preview just produced thousands of working zero-day exploits, autonomously, for about $2,000 each. The "medium severity, high attack complexity" CVEs your team has been deprioritizing? A model can now exploit them on demand.

This post shows what broke, why your current risk model no longer holds, and what to do about it.

Your risk scores assume human attackers

When a CVE gets rated "medium" with "high attack complexity," that score assumes a human attacker who needs specialized skills and months of work to build a working exploit.

Mythos doesn't need months. It chains multiple vulnerabilities, builds ROP chains across sequential network packets, and bypasses memory protections, all autonomously. A CVE rated 6.5 because it requires complex exploitation is effectively a 9+ when a model can build a working exploit for $2,000.

Every medium CVE in your backlog just became a high. Every high just became a critical. The framework the industry uses to decide what to patch first is calibrated for a world that no longer exists.

Here's the evidence.

Exploit development just got industrialized

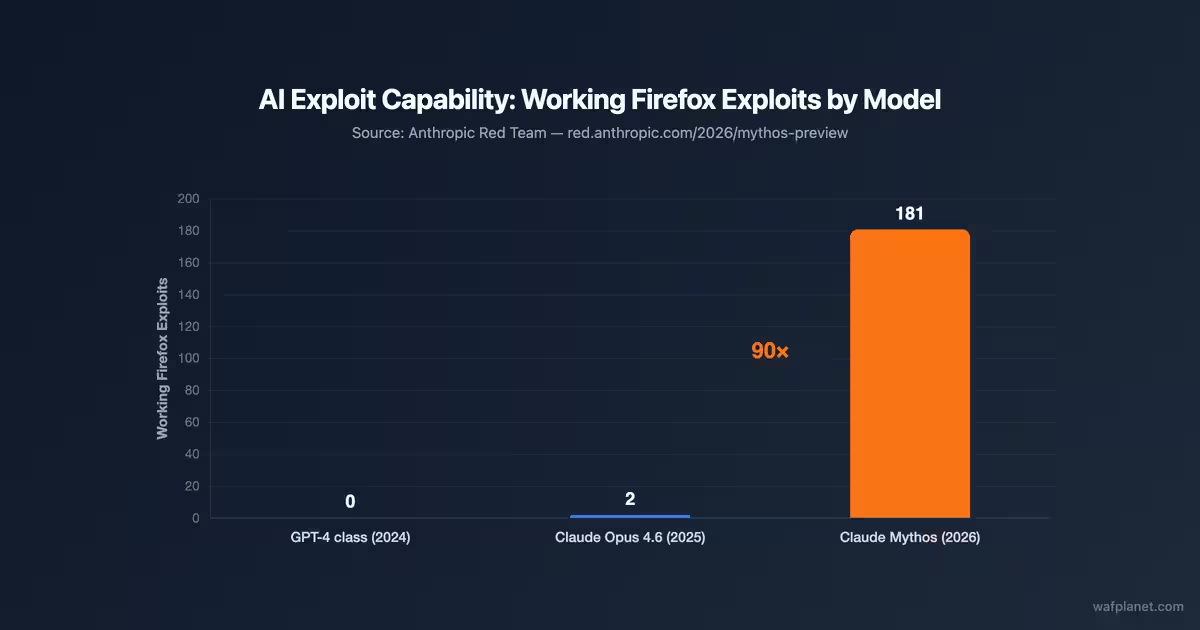

Anthropic benchmarked Mythos against the previous best model (Claude Opus 4.6) on the same Firefox exploit target. Opus 4.6 produced 2 working exploits. Mythos produced 181.

Working Firefox exploits by AI model generation. Source: Anthropic Red Team

That's a 90x improvement in a single model generation. And Mythos is just the beginning. Other models will cross this threshold. When they do, these numbers multiply. Exploit development just went from artisan craft to industrial output.

Google Project Zero, the best vulnerability research team on Earth, finds a few hundred significant bugs per year with 20-30 elite researchers. Mythos matched that volume in weeks. It found a 27-year-old bug in OpenBSD, a 17-year-old remote root in FreeBSD (then built a 20-gadget ROP chain to exploit it), and a 16-year-old heap overflow in FFmpeg. Cost per exploit chain: $1,000 to $2,000.

To understand what that price means, look at what these bugs are worth on the open market.

Vulnerability broker prices (Zerodium public pricing) vs. Mythos cost per exploit. Log scale.

A zero-day that sells for $500K to $2.5M on the broker market now costs $2,000 to find. The scarcity that made zero-days valuable is evaporating.

But price is only half the story. Look at volume.

Zero-day vulnerabilities detected as exploited in the wild per year (blue), tracked by Google Threat Intelligence Group (GTIG). Orange: zero-days discovered by Mythos in a single assessment (with working PoC exploits, not yet disclosed).

According to Google's Threat Intelligence Group, the entire global offensive security ecosystem, every government, every criminal group, every spyware vendor combined, produces 60 to 106 zero-days per year that get detected as exploited in the wild. That's the combined annual output of every nation-state program on Earth. Mythos found over 2,000 in a single run.

Can your WAF keep up?

Over 99% of what Mythos found is still unpatched. Anthropic's coordinated disclosure gives vendors 90 days, plus a 45-day extension. This summer, CVEs will start going public in volumes the NVD has never processed.

Published CVEs per year. 2025 estimated. 2026–2027 projected with AI-driven discovery impact. Source: NVD

CVE volume went from 14,700 in 2017 to over 40,000 in 2024, and that was still at human discovery speed. AI removes that speed limit. Mythos is just the first model to cross this threshold, and WAF vendors who write rules at the current pace will fall behind.

Mythos itself is only deployed defensively right now, and AI is already proving useful on the defense side too. We've been using AI to improve OWASP Core Rule Set regex patterns, and the early results are promising for both detection and false positive reduction. The same technology that finds vulnerabilities can write better WAF rules. But once other models reach Mythos' level, and they will, the volume of exploit knowledge in the wild grows whether defenders are ready or not.

Rogier Fischer, CEO of offensive security company Hadrian:

"The window between disclosure and exploitation is about to collapse. The same models that find these bugs can turn a CVE into a working exploit in minutes, fully autonomously. [...] The security equilibrium is breaking. Prepare accordingly."

The patch lag

The patch lag, the gap between a CVE going public and your WAF blocking it, is about to become the only metric that matters. When hundreds of critical CVEs with working PoCs drop in the same window, every vendor faces a triage problem they've never had.

Cloudflare, AWS WAF, Akamai, and Imperva have large rule engineering teams. They'll adapt, but they'll be stretched. The dozens of other providers, FortiWeb, Azure WAF, F5, open-source options like ModSecurity, Coraza, Open-AppSec, and BunkerWeb, depend on smaller teams or community rulesets. If you don't know your vendor's time-to-protection on new CVEs, you're flying blind.

Who actually gets protected?

Anthropic's Project Glasswing deploys Mythos defensively for about 50 partners: Apple, Amazon, Google, Microsoft, CrowdStrike. But the internet doesn't run on their code. It runs on the projects xkcd 2347 captured perfectly: the critical library one person in Nebraska has been maintaining since 2003.

Mythos found decades-old bugs in OpenBSD and FreeBSD, exactly the foundational software the internet depends on. Those maintainers are not on the Glasswing partner list. The big 50 get the umbrella. Everyone else gets the rain.

Do you know if your WAF actually works?

Not based on vendor marketing. Not based on CVSS scores. Not based on compliance checkboxes.

You know your WAF works when you test it against real exploits. That's what Patchlag does. It continuously tests whether your WAF blocks specific CVEs, including encoded variants and bypass attempts. No trust required. Verified coverage, in hours, not weeks.

Most WAFs fail against real exploits. We're building the tool that shows you which ones don't. We're close to launching. Stay tuned.

WAFplanet Take

Everything the security industry trusts, CVSS scores, patch windows, vendor SLAs, was calibrated for human-speed attackers. That era is over.

The organizations that test their defenses against reality instead of trusting abstractions will be the ones still standing when the CVE wave hits. We built WAFPlanet to help you compare your options. We're building Patchlag to help you verify them.

The flood is coming. Know where you stand.

Frequently Asked Questions

Are CVSS scores still accurate now that AI can exploit vulnerabilities?

No. CVSS scores assume human attackers with limited time and expertise. When AI models can autonomously chain exploits for $2,000, a CVE rated 'medium' with 'high attack complexity' is effectively a 'high' or 'critical.' The scoring framework has not been updated to reflect AI-era attacker capabilities.

Do WAFs stop zero-day attacks?

WAFs can block known attack patterns, but they depend on rule updates from vendors. The patch lag, the time between a CVE going public and your WAF having a rule for it, can range from hours to weeks. With AI-driven vulnerability discovery producing thousands of new CVEs, this gap is becoming the most critical metric in web security.

How can I test if my WAF is actually blocking real exploits?

Most organizations trust vendor claims without independent verification. WAFPlanet is building Patchlag, a service that continuously tests your WAF against specific CVEs, including encoded variants and bypass attempts. This provides verified coverage data instead of assumptions.

What is patch lag and why does it matter?

Patch lag is the time between a vulnerability being publicly disclosed and your WAF having a rule that blocks it. With AI models now producing thousands of zero-days with working exploits, the incoming volume of CVEs will overwhelm vendors who write rules at the current pace. Knowing your vendor's patch lag is now essential.

What is Project Glasswing?

Project Glasswing is Anthropic's program to deploy Claude Mythos Preview for defensive security with about 50 partner organizations including Apple, Amazon, Google, and Microsoft. However, access is limited to these partners, meaning most organizations will face the offensive consequences (more exploits) without the defensive benefit (early scanning).