We Let an AI Agent Run Autonomous Experiments on ModSecurity CRS. Here's What It Found.

Starting from stock OWASP CRS defaults, an AI agent improved balanced accuracy from 86.7% to 96.7% on CRS v3.3.8, and from 80.8% to 98.4% on CRS v4.24.0, running 30 experiments over 7 hours. Full methodology, results, and downloadable configs.

What happens when you let an AI agent modify WAF configuration, measure the results, and iterate, all on its own?

We built a system to find out. Starting from stock OWASP CRS defaults, the agent improved balanced accuracy from 86.7% to 96.7% on CRS v3.3.8, and from 80.8% to 98.4% on CRS v4.24.0, finding configuration improvements that most human operators would never think to try.

This post covers the full experiment: methodology, every finding, downloadable configs, and what it means for WAF operators. We tested against both CRS v3.3.8 and v4.24.0 because a lot of deployments are still on v3.

Background

The OWASP Core Rule Set (CRS) is the most widely deployed open-source WAF ruleset. It ships with sensible defaults, but those defaults are a compromise. Every WAF deployment faces the same tension: catch more attacks (true positive rate) vs. block fewer legitimate requests (false positive rate).

Tuning CRS is tedious manual work. Change a setting, restart, observe production for a few days, check logs, adjust, repeat. Most operators never get past the defaults.

What if an agent could do hundreds of those cycles in a single night?

The Autoresearch Pattern

We followed the autoresearch pattern (Andrej Karpathy, 2026): give an AI agent a scoring function, a configuration space to explore, and let it run experiments in a loop. The agent proposes changes, measures impact, keeps improvements, reverts failures.

Infrastructure

- WAF: OWASP ModSecurity CRS on nginx (official Docker image)

- Backend: nginx returning 200 for all valid requests

- Agent: Claude Code (Anthropic) in

--printmode, one experiment per invocation - Orchestrator: Zoutje, an AI assistant built on OpenClaw, managed infrastructure, dataset construction, and the agent loop

- Loop: Bash wrapper with watchdog for resilience. Each experiment: modify config, git commit, evaluate, log results, revert if worse.

- Hardware: MacBook Pro (Apple Silicon), Docker via OrbStack

Dataset: 5,354 Real HTTP Requests

This is where most WAF benchmarks fall short. Testing with hand-crafted payloads tells you nothing about false positive rates. We used real captured browsing sessions.

Legitimate Traffic (4,500 requests)

Sourced from openappsec/waf-comparison-project: real browsing captures from 692 websites across 14 categories.

| Category | Requests | Notes |

|---|---|---|

| E-Commerce | ~2,100 | Largest group. Complex cookies, cart operations, product searches |

| Travel | ~400 | Booking flows, date/location parameters |

| Information | ~300 | News sites, Wikipedia, documentation |

| Food | ~200 | Restaurant sites, delivery apps |

| Games | ~120 | Gaming platforms, in-game web views |

| Social Media | ~100 | Facebook, Twitter, Reddit interactions |

| Files Uploads | ~90 | Cloud storage, file sharing |

| Content Creation | ~80 | CMS, blogging platforms |

| Videos | ~50 | YouTube, streaming platforms |

| Search Engines | ~50 | Google, Bing searches |

| Applications | ~40 | SaaS applications |

| Technology | ~15 | Developer tools |

| Files Download | ~15 | Download managers |

| Streaming | ~10 | Music/audio streaming |

These are full HTTP requests with real headers, cookies, query parameters, and POST bodies. Not synthetic traffic.

Malicious Traffic (854 requests)

7 attack categories plus WAF bypass payloads:

| Category | Requests | Source |

|---|---|---|

| XSS | 150 | mgm-web-attack-payloads (true-positives) |

| SQLi | 150 | mgm-web-attack-payloads (true-positives) |

| Path Traversal | 150 | mgm-web-attack-payloads (true-positives) |

| Command Execution | 150 | mgm-web-attack-payloads (true-positives) |

| Log4Shell | 50 | mgm-web-attack-payloads (true-positives) |

| Shellshock | 24 | mgm-web-attack-payloads (true-positives) |

| XXE | 35 | mgm-web-attack-payloads (true-positives) |

| WAF Bypasses | 95 | payload-box/waf-bypass-payload-list |

Sources: - mgm-security-partners/mgm-web-attack-payloads -- curated payloads with labeled true-positives and false-positives - payload-box/waf-bypass-payload-list -- community WAF bypass collection

Traffic ratio: 16% malicious / 84% legitimate. Real-world ratios are typically 1-10% malicious, so this is attack-heavy but keeps evaluation fast while providing meaningful false positive signal.

Scoring: Balanced Accuracy

Balanced Accuracy = (True Positive Rate + True Negative Rate) / 2

This penalizes both missed attacks AND false positives equally. A WAF that blocks everything scores 50%. A WAF that allows everything also scores 50%. You need to be good at both.

Evaluation Speed

Each evaluation cycle sends all 5,354 requests to the WAF and records HTTP status codes. Takes about 35 seconds. That means the agent can run roughly 100 experiments per hour.

CRS v3.3.8 Results

Baseline

Stock CRS v3.3.8, Paranoia Level 1, all defaults:

| Metric | Value |

|---|---|

| Balanced Accuracy | 86.7% |

| True Positive Rate | 88.5% (756/854 attacks caught) |

| True Negative Rate | 84.8% (3,815/4,500 legit passed) |

| False Positive Rate | 15.2% (685 legit blocked) |

| Missed Attacks | 98 |

15.2% false positive rate. One in seven legitimate requests gets blocked. E-Commerce alone: 424 false positives. For a real store, that's customers unable to browse, add to cart, or check out.

The Experiments

The agent ran 11 experiments over approximately 3 hours. 9 were kept, 2 discarded.

Experiment 1: Expand Allowed Content Types (KEPT)

CRS blocks requests with Content-Type headers it doesn't recognize. Real traffic sends text/plain, application/octet-stream, application/grpc, application/x-protobuf, and others not in the default list.

Change: Added common content types to tx.allowed_request_content_type.

| Metric | Before | After |

|---|---|---|

| BA | 86.7% | 91.6% |

| FPR | 15.2% | 5.2% |

| FP count | 685 | 236 |

One configuration line eliminated 449 false positives. Detection unchanged.

Experiment 2: Paranoia Level 2 (DISCARDED)

The obvious move. PL2 enables additional detection rules.

| Metric | PL1 | PL2 |

|---|---|---|

| BA | 91.6% | 72.6% |

| TPR | 88.5% | 94.0% |

| FPR | 5.2% | 48.9% |

Nearly half of all legitimate traffic blocked. The agent correctly discarded this and never tried raw PL2 again.

Experiment 3: Custom Path Traversal Rules (KEPT)

CRS was missing 51 traversal attacks. The agent wrote two rules:

- LFI target path detection: matches

etc/passwd,windows/system32,proc/selfwith flexible separators that catch encoding evasion (percent-encoding, hex escapes, overlong UTF-8) - Overlong UTF-8 traversal encoding: catches

%c0%af,%e0%80%af, IIS%u002f-- invalid UTF-8 that is never used in legitimate traffic

Result: BA 91.6% to 94.0%. Caught 41 more attacks, zero new false positives.

Experiment 4: HTTP Methods + Cookie SQLi Exclusions (KEPT)

- Added PUT, DELETE, PATCH to allowed methods (real e-commerce uses these)

- Excluded cookies from SQLi detection (tracking cookies contain URL-encoded JSON that looks like SQL)

Result: BA 94.0% to 94.4%. FPR dropped from 5.2% to 4.5%.

Experiments 5-6: Cookie + Referer Exclusions (KEPT)

Extended cookie exclusions to XSS, RCE, LFI, RFI, PHP injection, and session fixation rules. Excluded Referer header from all attack detection.

The insight: cookies and Referer contain legitimate content (URLs, encoded JSON, HTML fragments) that triggers signature rules. In this dataset, zero attacks used cookies or Referer as vectors.

Result: BA 94.4% to 94.9%. FPR down to 4.0%.

Experiment 7: Custom SQL Detection Rules (KEPT)

Three new rules for SQLi patterns CRS missed:

- SELECT ... FROM <table> in parameters

- Boolean tautologies (OR 1=1, AND 'x'='x')

- ORDER BY <number> column enumeration

Result: BA 94.9% to 96.1%. Biggest single TPR jump: 93.7% to 96.1%.

Experiment 8: IFS Bypass + Data URI XSS (DISCARDED)

Tried catching $IFS shell variable substitution, data URI XSS, and undefined variable injection. Caught 2 more attacks but added 10 false positives. Net gain: +0.001 BA.

Not worth the false positive cost. Correctly discarded.

Experiment 9: Encoding Detection + XSS/Shellshock (KEPT)

- Broader overlong UTF-8 coverage

- XSS

javascript:with embedded whitespace bypass - Obfuscated Shellshock (dot-separated function definitions)

- Sensitive web path detection (

/var/www/)

Result: BA 96.1% to 96.7%. TPR reached 97.4%.

v3.3.8 Final Results

| Metric | Baseline | Optimized | Change |

|---|---|---|---|

| Balanced Accuracy | 86.7% | 96.7% | +11.5% |

| True Positive Rate | 88.5% | 97.4% | +10.1% |

| True Negative Rate | 84.8% | 96.0% | +13.2% |

| False Positive Rate | 15.2% | 4.0% | -73.7% |

| False Positives | 685 | 178 | -74.0% |

| Missed Attacks | 98 | 22 | -77.6% |

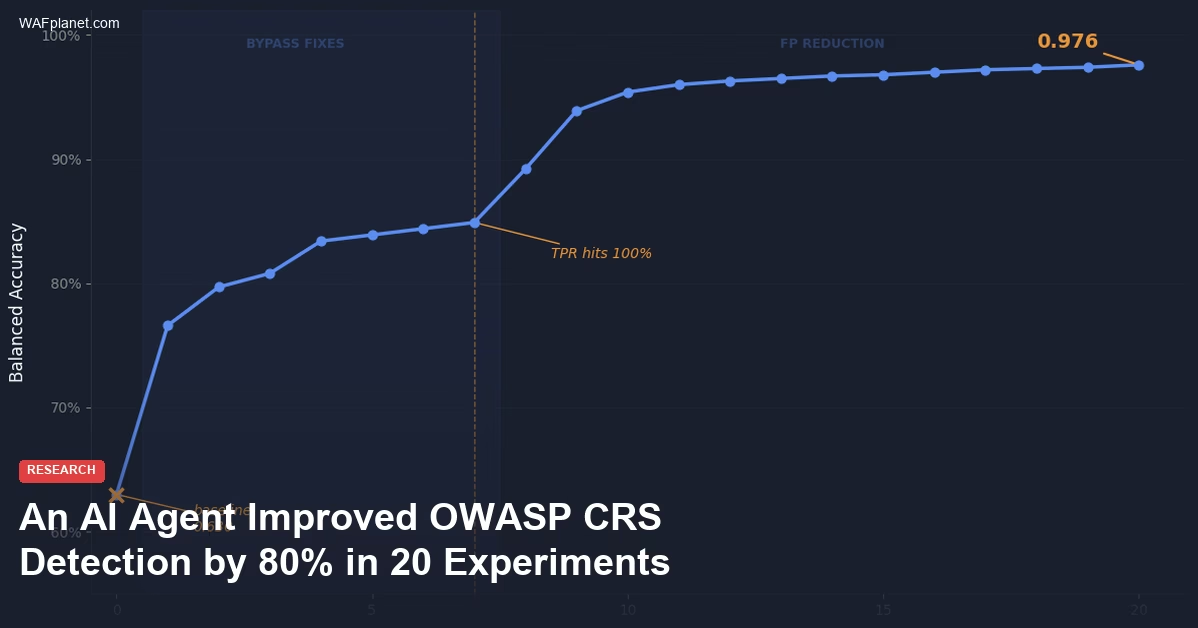

v3.3.8 Optimization Trajectory

# BA TPR FPR What changed

0 0.867 0.885 15.2% Baseline (stock CRS PL1)

1 0.916 0.885 5.2% + content types

2 0.940 0.933 5.2% + LFI/traversal rules

3 0.944 0.933 4.5% + allowed methods, cookie SQLi exclusion

4 0.946 0.937 4.5% + cookie XSS exclusion, SSTI rule

5 0.948 0.937 4.1% + cookie RCE/LFI/Referer exclusions

6 0.949 0.937 4.0% + shell arithmetic, more cookie exclusions

7 0.961 0.961 4.0% + SQL detection rules

8 0.967 0.974 4.0% + encoding, XSS bypass, shellshock rules

Two clear patterns: 1. Early gains came from reducing false positives (content types, cookie/header exclusions) 2. Later gains came from custom detection rules (LFI, SQLi, SSTI, Shellshock)

The agent figured out that you fix the FP problem first, then safely add detection.

Download: v3.3.8 Optimized Config

The optimized configuration consists of two files:

- REQUEST-900-BEFORE.conf -- paranoia level, thresholds, allowed content types and methods

- RESPONSE-999-AFTER.conf -- cookie/header exclusions + 11 custom detection rules

Drop these into your CRS deployment:

# Mount as Docker volumes

volumes:

- ./REQUEST-900-BEFORE.conf:/etc/modsecurity.d/owasp-crs/rules/REQUEST-900-EXCLUSION-RULES-BEFORE-CRS.conf

- ./RESPONSE-999-AFTER.conf:/etc/modsecurity.d/owasp-crs/rules/RESPONSE-999-EXCLUSION-RULES-AFTER-CRS.conf

Important: These configs were optimized against this specific dataset. Test against your traffic before deploying to production.

CRS v4.24.0 Results

CRS v4 is the current release. We ran the same experiment against it.

Baseline

Stock CRS v4.24.0, Paranoia Level 1, all defaults:

| Metric | v3.3.8 baseline | v4.24.0 baseline |

|---|---|---|

| Balanced Accuracy | 86.7% | 80.8% |

| True Positive Rate | 88.5% | 91.3% |

| True Negative Rate | 84.8% | 70.3% |

| False Positive Rate | 15.2% | 29.7% |

| False Positives | 685 | 1,336 |

v4 catches more attacks (+3% TPR) but nearly doubles false positives. The new rules are more aggressive, which is the right security decision, but operators need to tune more aggressively too.

The Experiments

The agent ran 19 experiments over approximately 4 hours. 17 were kept, 2 discarded.

The v4 optimization took a fundamentally different path than v3. Here's what happened:

Phase 1: Tame the False Positives (Experiments 1-3)

The agent's first move was raising the inbound anomaly score threshold from 5 to 7. On v3, the default of 5 worked fine. On v4, the stricter rules generate higher anomaly scores on legitimate traffic, so a higher threshold is needed. This single change cut false positives from 1,336 to 290.

The tradeoff: TPR dropped from 91.3% to 76.5%. The agent needed to earn that detection back.

Phase 2: Custom Detection Rules (Experiments 4-12)

With the false positive pressure relieved, the agent wrote 30+ custom detection rules across 9 experiments:

- Path traversal with encoding evasion (overlong UTF-8, IIS Unicode, hex-prefix)

- Shellshock and obfuscated variants

- XXE with case-insensitive matching

- SQLi timing attacks, blind injection, function calls, comment evasion

- Command execution (shell metacharacters, IFS substitution, Windows commands)

- SSTI arithmetic probes and template syntax detection

- XSS event handler evasion and javascript protocol bypass

- Log4Shell/JNDI with nested

${}, unicode, and protocol scheme variants - Buffer overflow indicators and web root path access

By experiment 12, TPR was back to 98.4% -- higher than the baseline -- while keeping FPR at 7.3%.

Phase 3: Surgical FP Reduction (Experiments 13-19)

With detection maxed out, the agent switched strategies:

- Tightened its own rules -- removed overly broad patterns from traversal, shellshock, and SSTI rules that were catching legit traffic

- Removed high-FP CRS rules -- identified CRS rule 932270 (Unix Shell Expression) as causing 157 false positives with zero detection benefit, and rule 942550 (SQL Injection Comment Sequence) as causing 31 FPs for only 1 additional detection

- Added content types -- same trick that worked on v3, adding

text/plainand common content types to reduce CRS rule 920420 triggers

v4.24.0 Final Results

| Metric | Baseline | Optimized | Change |

|---|---|---|---|

| Balanced Accuracy | 80.8% | 98.4% | +21.8% |

| True Positive Rate | 91.3% | 98.5% | +7.9% |

| True Negative Rate | 70.3% | 98.4% | +39.8% |

| False Positive Rate | 29.7% | 1.6% | -94.5% |

| False Positives | 1,336 | 74 | -94.5% |

| Missed Attacks | 74 | 13 | -82.4% |

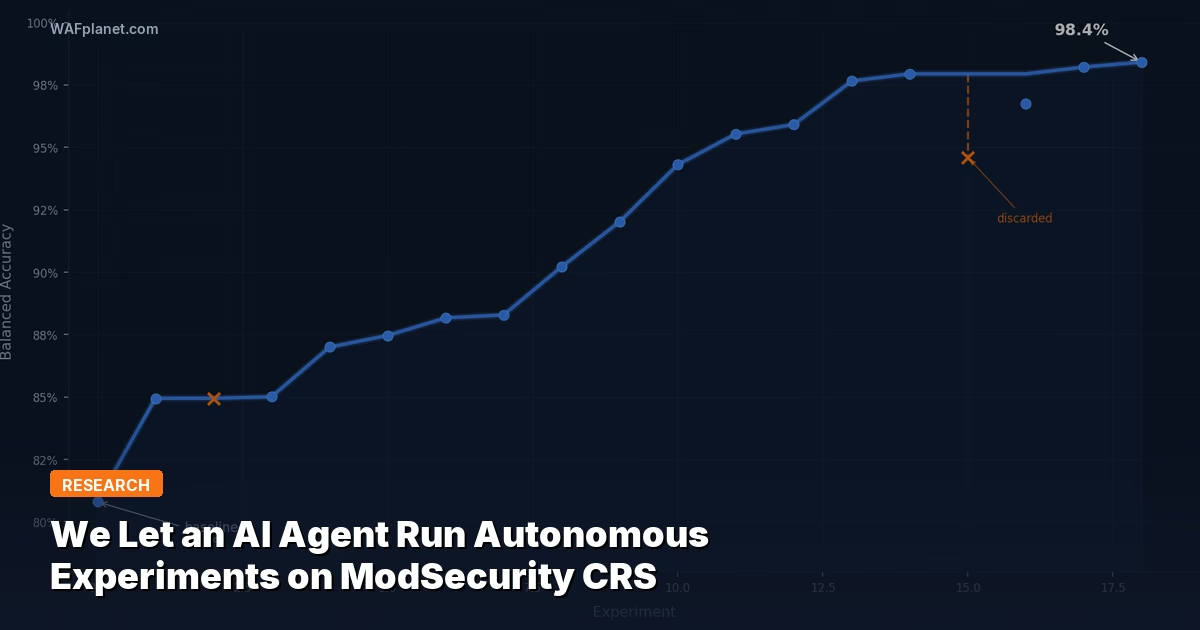

v4.24.0 Optimization Trajectory

# BA TPR FPR What changed

0 0.808 0.913 29.7% Baseline (stock CRS v4.24.0 PL1)

1 0.850 0.765 6.4% + anomaly threshold 7 (massive FP cut, TPR drops)

2 0.870 0.813 7.3% + traversal rules (start recovering TPR)

3 0.875 0.822 7.3% + shellshock/XXE rules

4 0.882 0.836 7.3% + SQLi timing/blind + cmdexe rules

5 0.883 0.838 7.3% + SQLi functions + shell arithmetic

6 0.902 0.877 7.3% + overlong UTF-8 + sensitive paths

7 0.920 0.913 7.3% + SSTI + SQL tautology + Windows cmds

8 0.943 0.959 7.3% + flexible traversal + more SQLi + cmd injection

9 0.955 0.984 7.3% + XSS evasion + shellshock + UTF-7 XXE

10 0.959 0.984 6.5% tighten rules (reduce FPs)

11 0.977 0.984 3.0% remove CRS rule 932270 (157 FPs, 0 TPs)

12 0.979 0.982 2.4% remove CRS rule 942550 (31 FPs, 1 TP)

13 0.968 0.956 2.0% + Log4Shell/JNDI detection (37 catches)

14 0.982 0.985 2.0% + SQLi version/blind + XSS handlers

15 0.984 0.985 1.6% + content types (same trick as v3)

The v4 trajectory is different from v3. On v3, the agent reduced FPs first, then added detection. On v4, it had to raise the anomaly threshold first (accepting a TPR drop), then spent 9 experiments writing custom rules to recover and exceed the original detection rate, then surgically removed high-FP CRS rules to clean up the remaining false positives.

Download: v4.24.0 Optimized Config

The optimized configuration consists of two files:

- REQUEST-900-BEFORE.conf -- anomaly threshold (7), allowed content types

- RESPONSE-999-AFTER.conf -- CRS rule removals (932270, 942550), 30+ custom detection rules

Drop these into your CRS v4 deployment:

# Mount as Docker volumes

volumes:

- ./REQUEST-900-BEFORE.conf:/etc/modsecurity.d/owasp-crs/rules/REQUEST-900-EXCLUSION-RULES-BEFORE-CRS.conf

- ./RESPONSE-999-AFTER.conf:/etc/modsecurity.d/owasp-crs/rules/RESPONSE-999-EXCLUSION-RULES-AFTER-CRS.conf

Important: These configs were optimized against this specific dataset. Test against your traffic before deploying to production.

v3 vs v4: Side-by-Side

| v3.3.8 Baseline | v3.3.8 Optimized | v4.24.0 Baseline | v4.24.0 Optimized | |

|---|---|---|---|---|

| Balanced Accuracy | 86.7% | 96.7% | 80.8% | 98.4% |

| True Positive Rate | 88.5% | 97.4% | 91.3% | 98.5% |

| False Positive Rate | 15.2% | 4.0% | 29.7% | 1.6% |

| False Positives | 685 | 178 | 1,336 | 74 |

| Missed Attacks | 98 | 22 | 74 | 13 |

| Experiments | 11 (9 kept) | 19 (17 kept) | ||

| Runtime | ~3 hours | ~4 hours |

Key differences: - v4 starts worse but ends better. v4's stricter default rules create more false positives out of the box, but this gives the agent more optimization surface. - Different strategies. v3: reduce FPs first, add detection later. v4: raise threshold, write custom rules to recover detection, then surgically cut remaining FPs. - v4 needed more custom rules. The higher anomaly threshold means more attacks slip under the scoring threshold, requiring explicit detection rules to compensate. - v4's final FPR (1.6%) is less than half of v3's (4.0%). The combination of threshold tuning + targeted CRS rule removal is more effective than v3's content-type/cookie approach alone.

The Custom Rules (Both Versions)

Here are the detection rules the agent wrote, with explanation and examples for each.

Rule 1: LFI Target Path Detection

What it catches: Path traversal attempts targeting sensitive OS files, with flexible separators to handle encoding evasion.

Examples blocked:

../../etc/passwd

..%c0%af..%c0%afetc%c0%afpasswd

..%bg%qfetc%bg%qfshadow

../../windows/system32/config/sam

/proc/self/environ

Why CRS misses some of these: CRS detects standard ../ traversal but some encoded variants with invalid percent-encoding (%bg, %qf) or hex escapes (0x5c) slip through the URL decode transformations.

Rule 2: Overlong UTF-8 Traversal Encoding

What it catches: Invalid UTF-8 byte sequences used exclusively for WAF evasion.

Examples blocked:

%c0%af (overlong / -- 2-byte encoding of a 1-byte character)

%c1%1c (overlong \ )

%e0%80%af (3-byte overlong /)

%f0%80%80%af (4-byte overlong /)

%u002f (IIS Unicode /)

%u005c (IIS Unicode \)

Why this has zero false positives: Overlong UTF-8 is explicitly forbidden by RFC 3629. No legitimate software produces these byte sequences. Their only purpose is WAF/filter evasion.

Rule 3: SSTI Arithmetic Probes

What it catches: Server-Side Template Injection fingerprinting via arithmetic inside template delimiters.

Examples blocked:

{{7*7}} (Jinja2/Twig)

${7*7} (FreeMarker/Velocity)

*{7*7} (Thymeleaf)

<%= 7*7 %> (ERB/JSP)

Why this works: Penetration testers and tools (tplmap, Burp) use arithmetic expressions to confirm template injection. If the response contains 49, the template engine evaluated the expression. Legitimate users never send {{7*7}} as a parameter value.

Rule 4: Shell Arithmetic Probes

What it catches: Blind command execution probing via shell arithmetic.

Examples blocked:

$((1+1))

$((5-3))

$((7*7))

Why this works: Automated RCE scanners (commix, etc.) use $((N+M)) to test for command injection without triggering obvious command signatures. The response revealing the arithmetic result confirms execution. Never appears in legitimate parameters.

Rule 5: SQL Comment WAF Bypass

What it catches: Inline SQL comments used to split keywords and evade signature detection.

Examples blocked:

UN/**/ION SE/**/LECT

/*!50000SELECT*/ /*!50000FROM*/

1'/**/OR/**/1=1--

Why this works: SQL comments inside HTTP parameters are never legitimate. The /**/ pattern is specifically used to break up SQL keywords that WAF signatures match as whole words. MySQL versioned comments (/*!50000...*/) are a MySQL-specific evasion technique.

Rules 6-8: SQL Injection Patterns

Bare SELECT...FROM, boolean tautologies (OR 1=1), and ORDER BY <number> column enumeration. These supplement CRS's existing SQLi detection for patterns that bypass the libinjection-based checks.

Rules 9-11: XSS/Shellshock/Path Detection

- XSS

javascript:with embedded whitespace (filter evasion) - Obfuscated Shellshock function definitions

- Direct access to

/var/www/paths

Key Takeaways for WAF Operators

1. Your Default CRS Is Probably Blocking More Legit Traffic Than You Think

Stock CRS PL1 blocked 15% of real browsing traffic on v3, 30% on v4. If you're running defaults on a site with real users (not just an API), check your false positive rate.

Quick win: Expand tx.allowed_request_content_type to include content types your application actually receives.

2. Cookies and Referer Headers Are False Positive Factories

Real cookies contain tracking pixels, encoded JSON, HTML fragments, and URL parameters that trigger attack signatures everywhere. If cookie-based attacks aren't in your threat model, excluding REQUEST_COOKIES from attack detection is the single biggest FP reduction.

3. PL2 Is a Trap (Without Tuning)

Paranoia Level 2 improves detection by ~5% but can double or triple your false positives. PL1 with targeted custom rules consistently outperformed raw PL2 in our tests.

4. Custom Rules for Encoding Evasion Are Free Wins

Overlong UTF-8, IIS Unicode encoding, SQL comment splitting -- these patterns have zero legitimate use. Adding detection for them improves security with no false positive cost.

5. AI Agents Can Optimize WAF Config

11 experiments in 3 hours. A human doing the same work would need days. The agent correctly identified dead ends, stacked improvements, and found non-obvious optimizations (like fixing content types before adding detection rules).

Methodology and Limitations

What This Is

An experiment in AI-driven security configuration optimization, applying the autoresearch pattern to WAF tuning.

What This Is Not

- Not a production deployment. Test against your traffic before deploying.

- Not a replacement for human review. Every rule should be reviewed by a security engineer.

- Not a comprehensive benchmark. 854 malicious requests is useful for iterative optimization but isn't statistically exhaustive.

Dataset Limitations

- Legitimate traffic is from the openappsec project's 2024 browsing captures. Your traffic will differ.

- Malicious payloads are from public repositories. Real attackers use different techniques.

- Cookie-based attacks are underrepresented, which may make cookie exclusions appear safer than they are.

- The dataset doesn't include API traffic, WebSocket connections, or file upload payloads.

Reproducibility

Everything is open source:

- Repository: github.com/wafplanet/autoresearch

- Dataset: dataset/requests.jsonl (5,354 requests)

- Evaluation: scripts/evaluate.py

- Agent loop: scripts/run-agent.sh + scripts/watchdog.sh

- Results: results.tsv (v3), results-v4.tsv (v4)

Clone the repo, run docker compose up -d, run python3 scripts/evaluate.py. Verify every number in this post.

Transparency

This experiment was built and run by an AI agent (Zoutje/OpenClaw) orchestrating another AI agent (Claude Code/Anthropic). A human (Thijs de Zoete) designed the experiment, reviewed results, and made editorial decisions. The detection rules were written by Claude Code during autonomous experiment cycles. This blog post was drafted collaboratively.

We believe AI-assisted security research should be transparent about its methods. If an AI helped find it, say so.

What's Next

- We're evaluating contributions to the OWASP CRS project for the detection rules with zero false positives (overlong UTF-8, SSTI probes, shell arithmetic).

- We're exploring longer runs (24h+) with larger datasets to see where the optimization plateaus.

- We want to test against CRS v4's newer rule categories and see how the agent adapts.

If you try this against your own traffic, we'd love to hear what it finds. Open an issue on the repo or reach out.